A compliance output is only valuable when you can stand behind it.

Picture a familiar moment: an auditor (internal or external) asks how you concluded that a third-party due diligence control is effective, or why a risk scenario was scored “medium” rather than “high.” You open a neatly written AI-generated memo. It reads well. It is logically structured. Then the follow-up question lands: “What was it based on, and who validated it?”

If you cannot answer that in minutes, the memo is not an asset. It is a liability.

The promise everyone is selling

Ask most AI vendors in legal or compliance what their tool delivers and you will hear the same value proposition: it eliminates manual work.

That promise is real. The operational layer in compliance is heavy, and it is expensive in both time and senior attention:

- collecting information and chasing documentation

- building and sending questionnaires

- tracking remediation actions

- compiling evidence for audits

- drafting policies, risk assessments, and reports

Automating that execution layer can increase capacity without increasing headcount. It also reduces the “quarter-end scramble” effect many teams experience before an AFA-style review, an ISO certification audit, or a board committee meeting.

The problem is what often happens next: efficiency becomes the goal, and time saved becomes the primary success metric.

In compliance, that is never the finish line.

The harder problem nobody talks about

Compliance work is not done when an answer exists. It is done when the answer can be relied upon by a third party.

That third party may be:

- the Agence française anticorruption (AFA) reviewing whether your Sapin II measures are effectively implemented

- an ISO auditor assessing whether your management system is documented, implemented, monitored, and improved

- a judge examining whether your organization exercised due diligence

- a board making a governance decision based on a risk map and trend indicators

This distinction changes the evaluation criteria for AI.

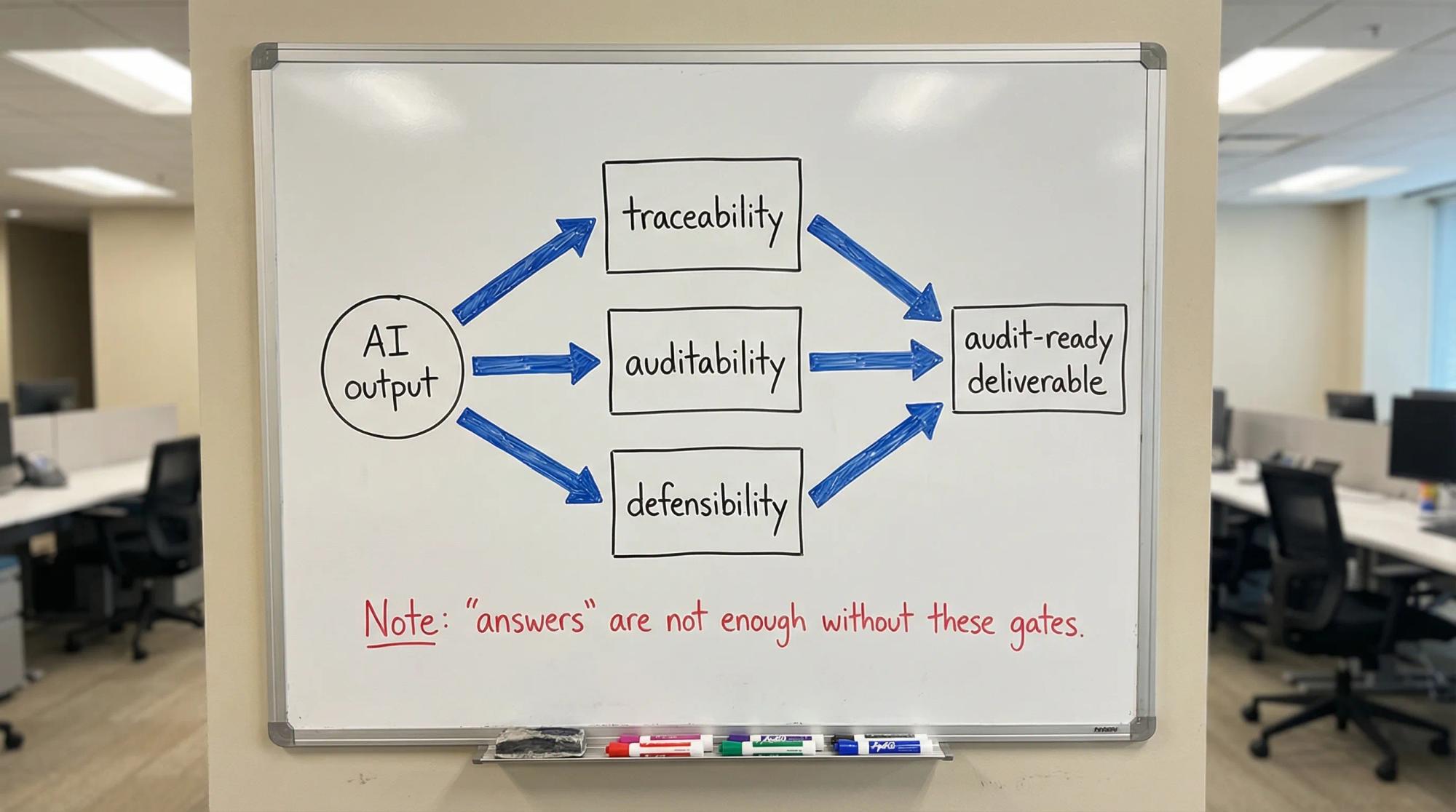

An AI tool that produces a plausible bribery risk assessment in seconds is impressive. But if the assessment cannot be traced to the underlying sources, if the process that produced it cannot be reconstructed, or if the analysis lives outside your control framework, it is operationally weak and often indefensible.

In practice, many AI deployments in compliance behave like drafting assistants. They generate content. They do not create compliance infrastructure.

The threshold question is not “is the answer good?” It is “can the output be used, signed off, and defended?”

The three bars any compliance AI must clear

For compliance teams, there are three non-negotiable bars. They are not nice-to-have features. They are the minimum conditions for outputs that can survive scrutiny.

Bar 1: traceability

Traceability means you can show, for any output, exactly what it was based on:

- which regulatory texts (and which version)

- which internal policies and procedures (and which version)

- which data points and records (and their provenance)

- which methodology for risk estimation

- which assumptions, thresholds, and risk criteria

Not “in principle.” In practice, on demand, in a format a third party can follow.

This matches what auditors and regulators look for when they assess effectiveness and documentation rather than the mere existence of documents. Under Sapin II, for example, the AFA publishes its expectations as guidance, which emphasizes demonstrable implementation and the ability to evidence your program elements over time (see the recommendations published on the AFA's official site). The text of the law itself is available on Legifrance.

Practical traceability test (use this in vendor demos)

- can the tool cite the exact source paragraph(s) used, and link them to the output section they support?

- can it show which internal document versions were referenced?

- can it explain the methodology used for the risk evaluation?

- can it separate authoritative sources (law, regulator guidance, certified internal policies) from non-authoritative content (general web pages, marketing material)?

- can it produce a “sources and versions” annex you can attach to a file for audit?

If the AI cannot do this, you will eventually rebuild the trail manually, which removes most of the value.
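To make the "sources and versions" annex concrete, here is a minimal Python sketch of what such a record could look like. The `SourceRef` fields and the `build_annex` function are hypothetical names chosen for illustration, not the format of any particular tool; the example entries are invented.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SourceRef:
    """One source relied on by an output (all fields are illustrative)."""
    title: str
    version: str
    as_of: date
    authoritative: bool  # law, regulator guidance, approved internal policy
    supports: str        # section of the output this source supports


def build_annex(sources: list[SourceRef]) -> str:
    """Render a plain-text annex, listing authoritative sources first."""
    ordered = sorted(sources, key=lambda s: (not s.authoritative, s.title))
    lines = ["Sources and versions annex"]
    for s in ordered:
        kind = "authoritative" if s.authoritative else "non-authoritative"
        lines.append(
            f"- {s.title} (version {s.version}, as of {s.as_of.isoformat()}) "
            f"[{kind}] supports: {s.supports}"
        )
    return "\n".join(lines)


# Invented example entries, for illustration only.
sources = [
    SourceRef("Vendor blog post", "n/a", date(2025, 3, 1), False, "Context"),
    SourceRef("Internal anti-bribery policy", "v4", date(2025, 1, 15), True, "Section 2"),
]
annex = build_annex(sources)
print(annex)
```

The point of the sketch is the shape of the record: every source carries a version, a date, an authority flag, and the part of the output it supports, so the annex can be attached to an audit file without manual reconstruction.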

Bar 2: auditability

Auditability means the process that produced the output is documented and reviewable:

- who initiated the analysis and when

- what parameters were used (scope, entity, risk taxonomy, scoring model)

- what human review occurred, by whom, and at which step

- what changes were made after review

- what approval decision was recorded (and by which accountable owner)

In regulated environments, process is part of the control.

This is why management system standards emphasize documented information and controlled processes. ISO 37001 (anti-bribery) and ISO 37301 (compliance management systems) are explicit about structured governance, competence, documentation, and continual improvement (see the ISO overview pages for each standard).

Spain’s UNE standards similarly push programs toward structured, verifiable operation, not just policy statements. For reference: UNE 19601 (criminal compliance) and UNE 19603 (competition compliance).

Practical auditability test

- does the system maintain an immutable activity log (create, edit, approve, publish)?

- can you export a complete audit trail for one output (risk assessment, policy, due diligence file)?

- does it enforce review steps, or does it merely allow them?

- can you prove segregation of duties (drafter vs approver) where required?

If an output appears “out of a black box,” you may still use it for brainstorming. You cannot safely rely on it as compliance evidence.
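One way to picture an activity log that resists silent edits is a hash chain, where each entry stores a hash of the previous one. The Python sketch below is an assumption about how such a log could work, not a description of any product; `ActivityLog`, its methods, and the actor names are hypothetical. A hash chain is tamper-evident rather than truly immutable, so a real deployment would also need protected storage.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(record: dict) -> str:
    """SHA-256 over a canonical JSON form of the record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class ActivityLog:
    """Append-only log; each entry stores the hash of the previous entry."""
    entries: list[dict] = field(default_factory=list)

    def append(self, actor: str, action: str, timestamp: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        record = {"actor": actor, "action": action,
                  "timestamp": timestamp, "prev_hash": prev_hash}
        record["hash"] = _digest(record)  # hash computed before the key is added
        self.entries.append(record)

    def verify(self) -> bool:
        """Return True if no entry was altered, removed, or reordered."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True

    def segregation_ok(self) -> bool:
        """True if nobody who created or edited the output also approved it."""
        drafters = {e["actor"] for e in self.entries
                    if e["action"] in ("create", "edit")}
        approvers = {e["actor"] for e in self.entries
                     if e["action"] == "approve"}
        return drafters.isdisjoint(approvers)


log = ActivityLog()
log.append("alice", "create", "2026-01-10T09:00:00Z")
log.append("bob", "approve", "2026-01-12T14:30:00Z")
print(log.verify(), log.segregation_ok())
```

If someone later rewrites an earlier entry, `verify()` returns False because the stored hash no longer matches the entry's content. The same log also answers the segregation-of-duties question directly.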

Bar 3: defensibility

Defensibility is the hardest bar and the one that matters when it counts.

A defensible output is one that:

- is consistent with applicable law and recognized guidance

- reflects your organization’s specific risk profile (sector, footprint, third parties, business model)

- was produced through a process a reasonable compliance expert would recognize as rigorous

- can be explained and justified by the professionals who sign off on it

Defensibility is ultimately a human responsibility. AI can support it, or it can quietly undermine it.

A common failure mode is “plausible generality”: the output reads like it fits, but it is not grounded in your actual controls, your actual data, and your actual decisions.

Practical defensibility test

- can the tool distinguish between inherent risk and residual risk, and show how controls change the risk score?

- can it map each conclusion to a control, an owner, and evidence?

- can you reproduce the output later, or at least explain why it changed (model update, regulation update, new data)?

- can you demonstrate human challenge and decision-making, not just acceptance?

If the answer is no, the tool may still generate content, but it is not producing decision-grade compliance outputs.
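To illustrate what "show how controls change the risk score" can mean, here is a small Python sketch. The scoring model is an assumption made for the example (likelihood times impact on 1 to 5 scales, with each control removing a fraction of the remaining risk); your own methodology may differ, and the control names and effectiveness values are invented.

```python
from math import prod


def inherent_score(likelihood: int, impact: int) -> int:
    """Inherent risk before controls (assumed model: likelihood x impact)."""
    return likelihood * impact


def residual_score(inherent: float, control_effectiveness: dict[str, float]) -> float:
    """Residual risk after controls.

    Assumed model: each control removes a fraction (0.0 to 1.0) of the
    risk that remains after the other controls have been applied.
    """
    remaining = prod(1.0 - e for e in control_effectiveness.values())
    return round(inherent * remaining, 2)


# Invented controls and effectiveness estimates, for illustration only.
controls = {"third-party due diligence": 0.5, "gift register review": 0.2}
inherent = inherent_score(likelihood=4, impact=5)
residual = residual_score(inherent, controls)
print(f"inherent={inherent}, residual={residual}")
```

Whatever model you use, the useful property is the same: the effectiveness estimates are explicit inputs, so a reviewer can challenge each one and see how the residual score moves.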

A simple decision tree to separate “assistant” from “infrastructure”

Use this decision tree when evaluating an AI assistant for compliance professionals, whether the use case is risk mapping, policy drafting, third-party due diligence, or control testing.

Decision tree (text version)

If the tool generates an output, ask:

- can I export the sources, versions, and data inputs used?

- can I export the full audit trail of how the output was produced and approved?

- can I link conclusions to controls, owners, and evidence, and defend the reasoning in a review meeting?

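If the answer to any of these questions is no, treat the tool as a drafting assistant rather than compliance infrastructure. This is intentionally strict. In compliance, “almost auditable” behaves like “not auditable” when scrutiny arrives.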

What “embedded in structured workflows” actually means

The difference between a useful AI tool and genuine compliance infrastructure is not model sophistication. It is integration into your operating model.

A drafting assistant sits next to the workflow. A compliance AI is embedded inside it.

When AI is embedded, outputs are produced within a structured chain with:

- defined inputs (data and documents)

- versioned regulatory references

- documented review steps

- clear accountability (RACI)

- storage in an evidence library connected to risks, controls, and remediation

That architecture matters because compliance is an interlocking system. A risk map that does not connect to controls and evidence becomes a static spreadsheet. A policy that does not connect to training, attestations, exceptions, and monitoring becomes paper compliance.

A workflow template you can copy (one deliverable, end to end)

Below is a practical workflow pattern you can apply to a Sapin II risk map update, an ISO 37001 policy refresh, or a UNE 19603 antitrust risk analysis.

Step 1: define the “output object”

Write one sentence that defines what the deliverable is and what decision it supports.

Example: “2026 anti-bribery risk assessment for France and Spain subsidiaries, used to prioritize controls and third-party due diligence intensity.”

Step 2: list controlled inputs (with owners)

Inputs should be finite and owned.

- applicable texts and guidance (owned by legal/compliance)

- business footprint and activity changes (owned by finance or strategy)

- third-party universe and spend (owned by procurement)

- incidents, investigations, speak-up themes (owned by compliance and HR)

- control performance data (owned by control owners)

Step 3: force versioning rules

- regulatory references are versioned and dated

- internal policies are versioned and approval-dated

- data extracts are time-stamped and source-stated

Step 4: generate, then review with a defined challenge script

The human review should not be “does it read well?” It should be structured.

Use a challenge script like:

- what changed since last cycle, and is it reflected?

- where is the evidence for this conclusion?

- what is the residual risk logic, and do we accept it?

- which control owners must sign off?

Step 5: approve and publish into a control model

Approval should record:

- who approved

- what was approved (scope, version)

- what was explicitly not covered

Step 6: connect outputs to ongoing monitoring

Each top risk should connect to:

- key controls

- an effectiveness testing cadence

- remediation actions with due dates

- evidence collection rules

This is where AI moves from “report generator” to “program backbone.”

The compounding effect

When you combine operational efficiency with structured, auditable outputs, you unlock something larger than productivity.

You can move from periodic compliance to continuous compliance.

That shift is practical, not philosophical:

- risk assessments stop being annual snapshots and become living registers refreshed by defined triggers (new market, new distributor type, M&A, new pricing tool, new public tender activity)

- controls are monitored with repeatable tests, not only described on paper

- regulatory changes are tracked, assessed for applicability, and mapped to impacted controls

- evidence is collected routinely, not chased under deadline pressure

The operational effect is that audit readiness becomes the default state.

What to measure (because leadership will ask)

If you want resources, you need to define “what good looks like” in measurable terms.

Use metrics that signal effectiveness, not activity.

| Metric | What it tells you | Typical evidence source |
|---|---|---|
| Residual risk movement | whether risk decreases after controls and remediation | risk register with control links |
| Control test pass rate | whether controls work, not whether they exist | test logs, sampling outputs |
| Evidence retrieval time | whether you are audit-ready in practice | evidence library timestamps |
| Remediation velocity | whether issues are closed on time | action tracking with due dates |
| Review coverage | whether high-risk areas were actually reviewed | workflow approvals and logs |

If an AI tool cannot help you generate these metrics with traceable inputs, it is unlikely to help you demonstrate program effectiveness.
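As a rough illustration, three of these metrics can be computed from simple records. The definitions below are assumptions for the sketch (for example, open actions count as not closed on time), and the function names and sample data are hypothetical.

```python
from datetime import date
from statistics import median
from typing import Optional


def pass_rate(test_results: list[bool]) -> float:
    """Share of control tests that passed (0.0 if nothing was tested)."""
    return sum(test_results) / len(test_results) if test_results else 0.0


def on_time_closure_rate(actions: list[tuple[date, Optional[date]]]) -> float:
    """Share of actions closed on or before their due date.

    Each action is (due_date, closed_date); closed_date is None while open.
    Assumption: open actions count as not closed on time.
    """
    if not actions:
        return 0.0
    on_time = sum(1 for due, closed in actions
                  if closed is not None and closed <= due)
    return on_time / len(actions)


# Invented sample data, for illustration only.
tests = [True, True, False, True]
actions = [
    (date(2026, 1, 31), date(2026, 1, 20)),  # closed early
    (date(2026, 2, 15), date(2026, 3, 1)),   # closed late
    (date(2026, 3, 31), None),               # still open
]
retrieval_minutes = [4, 12, 45]

control_pass_rate = pass_rate(tests)
remediation_rate = on_time_closure_rate(actions)
median_retrieval = median(retrieval_minutes)
print(control_pass_rate, remediation_rate, median_retrieval)
```

Each figure is derived from records that already exist in a structured workflow (test logs, action tracking, evidence timestamps), which is what makes the metric itself traceable.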

What this demands from compliance professionals

This shift does not reduce the need for compliance judgment. It raises the bar for it.

Three capabilities become central.

Governance design as a primary competency

The critical skill is not prompt writing. It is workflow architecture: designing how compliance outputs are created, reviewed, stored, and monitored so they remain defensible.

That means being able to translate regulator expectations into operating processes.

Human challenge becomes more important, not less

As AI increases output volume, the bottleneck moves to validation and decision-making.

High-performing teams define:

- what requires human approval, and at what level

- how challenge is documented

- how disagreements are resolved and recorded

- when outputs must be escalated to leadership

You need an internal “AI output acceptance standard”

Create a one-page internal standard that states what an AI-generated compliance output must include before it can be used.

Here is a practical template.

AI output acceptance checklist (copy/paste)

- scope is explicit (entity, time period, processes)

- sources are cited, dated, and versioned

- assumptions and thresholds are documented

- links exist to relevant risks, controls, and owners

- human reviewer is named and comments are recorded

- approval decision is recorded (approve, approve with conditions, reject)

- storage location is defined (evidence library, not email)

- retention and confidentiality are respected

This turns “AI help” into governed production.
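If outputs are stored as structured records, the checklist can be enforced as a gate rather than left as a reminder. The Python sketch below assumes a hypothetical record format (a dictionary with the keys shown); the key names are illustrative, not a standard.

```python
# Hypothetical record keys mapped to the checklist items they satisfy.
REQUIRED_FIELDS = {
    "scope": "scope is explicit",
    "sources": "sources are cited, dated, and versioned",
    "assumptions": "assumptions and thresholds are documented",
    "links": "links exist to relevant risks, controls, and owners",
    "reviewer": "human reviewer is named and comments are recorded",
    "approval_decision": "approval decision is recorded",
    "storage_location": "storage location is defined",
    "retention_confirmed": "retention and confidentiality are respected",
}
ALLOWED_DECISIONS = {"approve", "approve with conditions", "reject"}


def acceptance_gaps(output: dict) -> list[str]:
    """Return the checklist items the output does not yet satisfy."""
    gaps = [label for key, label in REQUIRED_FIELDS.items()
            if not output.get(key)]
    decision = output.get("approval_decision")
    if decision and decision not in ALLOWED_DECISIONS:
        gaps.append("approval decision is not one of the allowed values")
    return gaps


# Invented draft record with no named reviewer, for illustration only.
draft = {
    "scope": "France and Spain subsidiaries, 2026, procurement processes",
    "sources": ["Internal anti-bribery policy v4 (2025-01-15)"],
    "assumptions": ["Risk criteria as defined in the scoring methodology"],
    "links": ["Risk R-12 linked to control C-07, owned by procurement"],
    "reviewer": "",
    "approval_decision": "approve with conditions",
    "storage_location": "evidence library",
    "retention_confirmed": True,
}
gaps = acceptance_gaps(draft)
print(gaps)
```

An empty list means the output can move forward; anything else names exactly what is missing before it can be relied upon.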

A vendor scorecard focused on defensible outputs

If you want to compare tools without getting lost in feature lists, score them on the three bars.

| Criterion | Why it matters | Minimum acceptable |
|---|---|---|
| Source citation and export | enables traceability under audit | exportable sources annex |
| Version control for policies and references | prevents “moving target” justifications | versioned, time-stamped references |
| Audit trail (who, what, when) | enables reconstructing the process | immutable activity log |
| Review and approval gates | enforces accountability | configurable approvals |
| Linkage to controls and evidence | enables defensibility | mapping to controls and evidence |
| Change impact handling | prevents silent drift | explain changes and triggers |
| Data provenance | avoids unverifiable inputs | source system and timestamp |
| Role-based access and segregation | supports governance | RBAC and separation options |

You can add “model explainability” or “advanced reasoning” later. If the basics above are missing, the tool will not hold up when scrutiny arrives.
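One way to apply the scorecard is to treat the "minimum acceptable" column as a gate, so that a high total cannot compensate for a missing basic. The Python sketch below assumes a hypothetical 0 to 2 scale (0 = missing, 1 = minimum acceptable, 2 = strong); the scale, function name, and vendor scores are invented for illustration.

```python
CRITERIA = [
    "Source citation and export",
    "Version control for policies and references",
    "Audit trail (who, what, when)",
    "Review and approval gates",
    "Linkage to controls and evidence",
    "Change impact handling",
    "Data provenance",
    "Role-based access and segregation",
]
MINIMUM = 1  # assumed scale: 0 = missing, 1 = minimum acceptable, 2 = strong


def score_vendor(scores: dict[str, int]) -> dict:
    """Gate on the minimum for every criterion, then report the total."""
    below = [c for c in CRITERIA if scores.get(c, 0) < MINIMUM]
    total = sum(scores.get(c, 0) for c in CRITERIA)
    return {"qualified": not below, "below_minimum": below, "total": total}


# Invented scores: vendor A meets every minimum, vendor B lacks an audit trail.
vendor_a = {c: 1 for c in CRITERIA}
vendor_a["Data provenance"] = 2
vendor_a["Review and approval gates"] = 2

vendor_b = {c: 2 for c in CRITERIA}
vendor_b["Audit trail (who, what, when)"] = 0

result_a = score_vendor(vendor_a)
result_b = score_vendor(vendor_b)
print(result_a)
print(result_b)
```

In this example vendor B has the higher total but is not qualified, which mirrors the point above: strength elsewhere does not make up for a missing basic.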

How Naltilia can help

If you are trying to move from AI answers to audit-ready compliance outputs, Naltilia is designed around structured workflows: regulatory risk assessment connected to controls, remediation actions, automated data collection, and workflow automation that preserves review steps and evidence. The practical value is not only faster drafting, but the ability to retrieve the “why,” the sources, and the audit trail behind each deliverable when an auditor, regulator, or leadership team asks.

If you want to discuss what defensible outputs look like for your Sapin II, ISO 37001, or UNE program, you can contact Naltilia.

This article is general information, not legal advice.