Most compliance teams have already felt the jump: a general-purpose LLM (ChatGPT, Claude, Le Chat) can draft, translate, summarise, and structure information faster than any junior analyst. Used well, it is genuinely useful.

But there is a trap: mistaking a great conversational interface for an operating system.

Compliance is not primarily a writing problem. It is a governance problem.

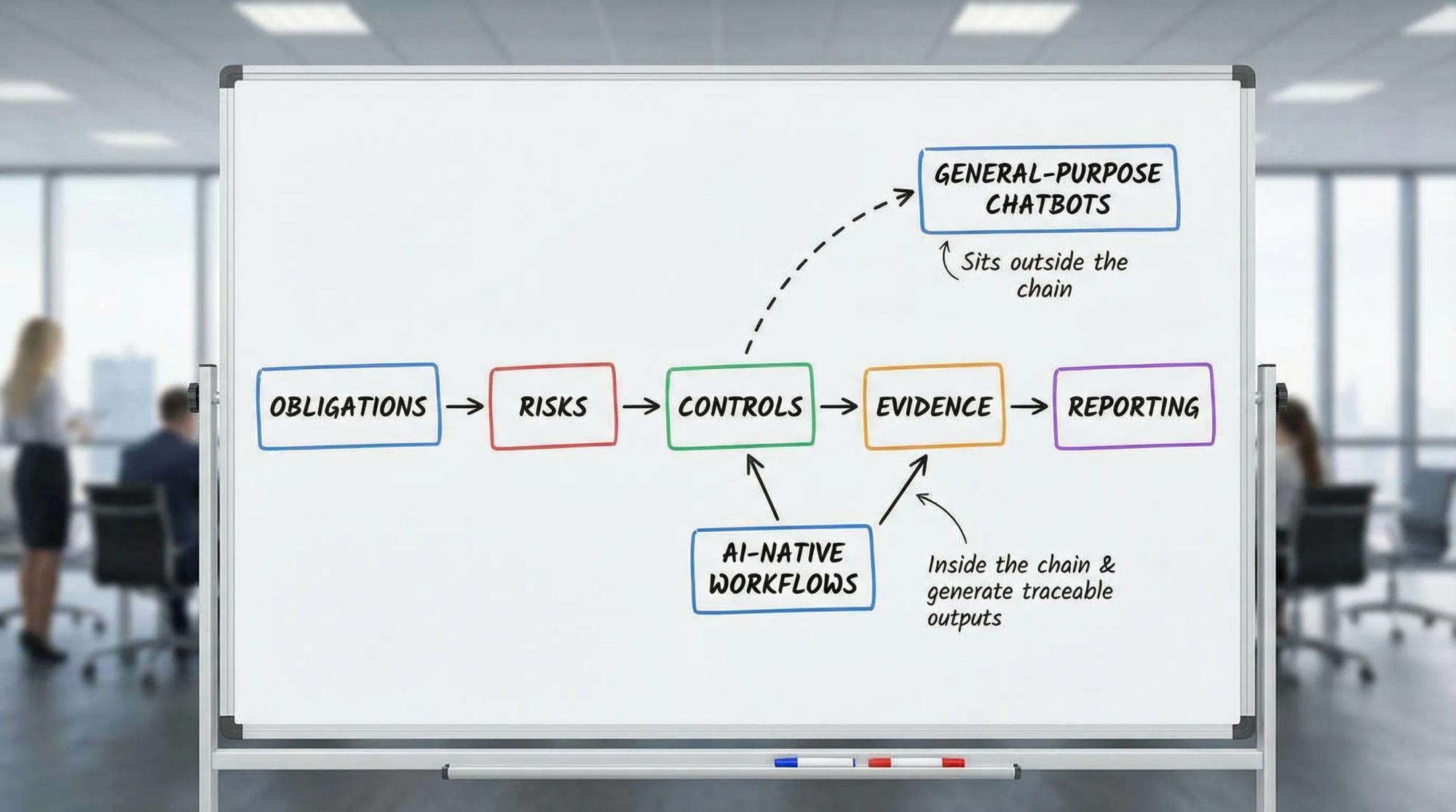

It is the discipline of turning obligations into identified risks, identified risks into controls, controls into evidence, and evidence into a compliance management system that keeps improving. All of this must remain defensible months or years later, under scrutiny. That “later” part is what most chatbot-style deployments miss.

What general-purpose LLMs are great at in compliance (and how to keep it safe)

General-purpose LLMs shine when the work product is text and the risk is manageable with review. In practice, that includes:

- Summarising a regulation, a guidance note, or a court decision for internal circulation

- Rewriting a policy section in clearer language for frontline teams

- Drafting training scripts and scenario vignettes (then validating with subject matter owners)

- Translating guidance for France and Spain rollouts, with legal review

- Brainstorming control ideas for a given risk scenario

- Turning a long investigation file into a structured narrative (timeline, actors, allegations, evidence list)

The reliability issue is not that LLMs “lie” on purpose; it is that they can produce plausible text without showing you the underlying chain of sources and decisions. So you need a simple protocol for any output that could later be used as compliance evidence.

This is also where data protection and confidentiality rules matter. If you cannot share a dataset with a consumer tool, do not.

Why “ChatGPT for compliance” hits a ceiling

When people say “ChatGPT for compliance,” they often mean: take a powerful LLM, add a compliance-flavoured prompt, wrap it in a UI, and let the team ask questions.

That is valuable up to a point, because a chatbot is fundamentally an interaction model: a user asks, the model answers, the user decides what to do next.

In low-stakes knowledge tasks, that is enough.

In compliance, it quickly runs into structural limits that show up during audits, AFA reviews, ISO certification work, internal investigations, or board scrutiny.

The five structural gaps that matter in audits

A chatbot deployment typically struggles with:

- No embedded accountability: who approved what, when, and based on which internal sources?

- No evidence chain: what documents were used, which version, and how were conclusions reached?

- No operational continuity: risks, actions, owners, deadlines, test results and exceptions live elsewhere

- No built-in controls over the AI itself: permissions, segregation of duties, escalation rules, audit logs

- No loop closure: a suggestion is not an implemented control, a draft is not a monitored obligation

The result is often more text, not more compliance. Sometimes it is worse: an eloquent answer can feel like certainty even when it misses context, internal policy nuances, or the latest decision trail.

Compliance deliverables are not “answers”, they are traceable systems

If your scope includes France’s anti-corruption framework, the heart of the Sapin II approach is not a single policy document. It is a set of program elements that need to work together in practice (risk mapping, code of conduct, third-party due diligence, controls, training, whistleblowing, sample testing, monitoring). The legal text is accessible on Légifrance, and the AFA publishes guidance that repeatedly emphasises effectiveness and documentation (AFA resources).

In Spain, the same logic is reflected in criminal compliance practice: UNE 19601 is designed to help organisations prevent and detect criminal risks and demonstrate a credible management system (it is commonly discussed alongside Article 31 bis of the Spanish Penal Code, available via the BOE). For competition compliance, UNE 19603 provides a risk-based structure for “management risk analysis” in antitrust, with governance, implementation, monitoring, and continuous improvement as the backbone.

Standards vary, but the common denominator is consistent:

- You must show how you identified the risk

- How you decided what to do about it

- How you implemented controls

- How you tested whether they work

- How you corrected issues

- How leadership oversaw the whole system

A conversational tool can help you describe these items. It does not, by itself, run them.

What an AI-native compliance workflow is (and why it changes the outcome)

An AI-native workflow flips the logic.

It does not start with “what can the model answer?” It starts with “what must the compliance function deliver and prove?”

Instead of a standalone chat, you build a system where AI is embedded inside the operating reality of compliance: the tasks, the evidence, the approvals, and the reporting.

Practically, that means the AI works on top of a compliance data model, not on top of a blank prompt. The data model typically includes:

- Obligations (by framework and jurisdiction)

- Risks (taxonomy, scenarios, inherent and residual assessment)

- Controls (design, frequency, owner, scope)

- Tests (plan, sampling, results, exceptions, remediation)

- Evidence (document type, version, retention, link to control/test)

- Decisions (approvals, exceptions, rationale)

Once that exists, AI can be used as an executor inside the workflow, not as an external advisor.
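To make the idea of a linked data model concrete, here is a minimal Python sketch. The class names, field names, and identifiers are illustrative assumptions, not a reference schema, and obligations and decisions are reduced to the bare minimum. What it shows is that once risks, controls, tests, and evidence are linked objects, a trail from risk to versioned evidence can be produced by traversal rather than by memory.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Evidence:
    evidence_id: str
    document_type: str
    version: str


@dataclass
class ControlTest:
    test_id: str
    performed_on: date
    result: str  # hypothetical values: "pass", "fail", "exception"
    evidence: list[Evidence] = field(default_factory=list)


@dataclass
class Control:
    control_id: str
    owner: str
    frequency: str
    tests: list[ControlTest] = field(default_factory=list)


@dataclass
class Risk:
    risk_id: str
    scenario: str
    obligations: list[str]  # obligation references, by framework and jurisdiction
    controls: list[Control] = field(default_factory=list)


def evidence_trail(risk: Risk) -> list[tuple[str, str, str, str]]:
    """Walk risk -> control -> test -> evidence; return one row per evidence item."""
    rows = []
    for control in risk.controls:
        for test in control.tests:
            for ev in test.evidence:
                rows.append(
                    (risk.risk_id, control.control_id, test.test_id,
                     f"{ev.evidence_id}@{ev.version}")
                )
    return rows


# Illustrative data only; all identifiers are made up.
risk = Risk(
    risk_id="R-001",
    scenario="Gift offered to a public official during a tender",
    obligations=["FR: Sapin II", "ES: UNE 19601"],
    controls=[
        Control(
            control_id="C-010",
            owner="Head of Procurement",
            frequency="quarterly",
            tests=[
                ControlTest(
                    test_id="T-2024-03",
                    performed_on=date(2024, 3, 29),
                    result="pass",
                    evidence=[Evidence("EV-77", "gift register extract", "v3")],
                )
            ],
        )
    ],
)

trail = evidence_trail(risk)
```

A real implementation would live in a database with retention rules and access control; the point of the sketch is only the linkage between objects.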

Quick comparison: chatbot outputs vs compliance-grade outputs

| Question you ask | Chatbot gives you | AI-native workflow can produce |
|---|---|---|
| “Do we need a gifts policy?” | A draft policy | A policy draft linked to your risk scenario, approval workflow, training assignment, and evidence requirements |
| “How do we test third-party due diligence?” | Test ideas | A test plan with sampling logic, assigned owners, due dates, captured results, and an exceptions log |
| “Write an audit response” | A narrative | An evidence pack: narrative plus traceable links to controls, versions, test results, and sign-offs |

This is the shift from conversational to executable.

Specialised agents: what they are in compliance terms

“Agent” is an overloaded word. In compliance, the useful definition is simple: a purpose-built executor that performs a specific task end-to-end, under constraints, and produces traceable outputs.

Examples that actually map to compliance work:

- A policy classifier agent that maps internal policies to a control framework (ISO 37001, UNE 19601, UNE 19603) and highlights missing sections

- A risk drift agent that monitors your risk register for stale scoring, missing owners, or inconsistent residual risk logic

- An audit pack agent that compiles evidence for a specific control set, checks versioning, and flags gaps before you hit “send”

- A remediation agent that turns a finding into assigned actions with deadlines, escalation rules, and status evidence

- A control design reviewer agent that checks a proposed control against your internal control standard and routes for approval

The point is not “smarter text.” The point is disciplined execution with guardrails.

Template: agent design sheet (copy and reuse)

| Field | Fill in like this |
|---|---|
| Agent purpose | “compile evidence for AFA audit control set A and flag gaps” |
| Trigger | “monthly schedule” or “when audit request is opened” |
| Allowed actions | “read evidence library, create checklist, draft pack” (avoid “approve” by default) |
| Prohibited actions | “delete evidence, change scores, close findings” |
| Data sources | “policy repository v2, control library, HRIS export, vendor tool” |
| Required traceability | “every output line links to a source object and version” |
| Human review | “control owner approves, compliance validates, legal reviews if external” |
| Audit log | “who triggered, what changed, what was exported, when” |

If you cannot fill this in clearly, you do not have an agent yet. You have a prompt.
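One way to see the difference between a prompt and an agent is to express part of the design sheet as an enforceable specification. The sketch below is a simplified illustration in Python: `AgentSpec`, its fields, and the action names are hypothetical, and it covers only the allowed actions, prohibited actions, and audit log rows. It denies anything not explicitly allowed and records every attempt.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentSpec:
    purpose: str
    trigger: str
    allowed_actions: frozenset[str]
    prohibited_actions: frozenset[str]
    audit_log: list[dict] = field(default_factory=list)

    def request(self, actor: str, action: str) -> bool:
        """Permit an action only if it is explicitly allowed and not prohibited.

        Every attempt is logged, including denied ones.
        """
        permitted = (
            action in self.allowed_actions
            and action not in self.prohibited_actions
        )
        self.audit_log.append(
            {
                "when": datetime.now(timezone.utc).isoformat(),
                "who": actor,
                "action": action,
                "permitted": permitted,
            }
        )
        return permitted


# Values taken from the design sheet; the snake_case action names are assumptions.
audit_pack_agent = AgentSpec(
    purpose="compile evidence for AFA audit control set A and flag gaps",
    trigger="when audit request is opened",
    allowed_actions=frozenset(
        {"read_evidence_library", "create_checklist", "draft_pack"}
    ),
    prohibited_actions=frozenset(
        {"delete_evidence", "change_scores", "close_findings"}
    ),
)

can_draft = audit_pack_agent.request("scheduler", "draft_pack")
can_approve = audit_pack_agent.request("scheduler", "approve")
can_delete = audit_pack_agent.request("scheduler", "delete_evidence")
```

Here "approve" is refused simply because it was never listed as allowed, which mirrors the "avoid approve by default" guidance in the sheet.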

A simple litmus test: can you replay the logic on demand?

When regulators, auditors, or external counsel ask questions, they are rarely asking for better writing. They are asking whether the program is coherent and evidenced.

Run this test internally:

Checklist: the “regulator knocks tomorrow” playback

- Can we show the specific risk scenario (not just a category) and when it was last reviewed?

- Can we map that risk to the applicable obligations (France and Spain where relevant)?

- Can we name the control owner and the operational process where the control actually happens?

- Can we show the latest control test result and the sampling logic?

- Can we show exceptions and remediation actions, with dates and closure evidence?

- Can we show what changed since the last review (process change, new market, new third party, new product)?

- Can we show escalations and the decisions made, including rationale?

A chatbot can help you narrate answers. An AI-native workflow helps you have the answers ready and consistent.
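Part of this playback can be automated as a gap check over each risk record. The following Python sketch is a hedged illustration: the field names, the dictionary shape, and the 365-day staleness threshold are all assumptions chosen for the example. Exceptions, remediation actions, and escalations are left out because they can legitimately be empty.

```python
from datetime import date, timedelta

# Hypothetical field names for items that must always be present.
REQUIRED_FIELDS = [
    "risk_scenario",
    "last_reviewed",
    "obligations",
    "control_owner",
    "latest_test_result",
    "sampling_logic",
]


def playback_gaps(record: dict, today: date, max_review_age_days: int = 365) -> list[str]:
    """Return the reasons a risk record could not be replayed on demand."""
    gaps = [f"missing: {name}" for name in REQUIRED_FIELDS if not record.get(name)]
    last_reviewed = record.get("last_reviewed")
    if last_reviewed and (today - last_reviewed) > timedelta(days=max_review_age_days):
        gaps.append("stale: last_reviewed")
    return gaps


# Illustrative record: no named control owner, and a review older than a year.
record = {
    "risk_scenario": "Intermediary paid on success fee in a public tender",
    "last_reviewed": date(2023, 1, 15),
    "obligations": ["FR: Sapin II", "ES: UNE 19601"],
    "control_owner": "",
    "latest_test_result": "pass",
    "sampling_logic": "25 randomly selected vendor files per quarter",
}

gaps = playback_gaps(record, today=date(2024, 6, 1))
```

Running a check like this on a schedule turns the checklist from an annual exercise into a standing signal of where the program cannot yet replay its own logic.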

Governance for AI in compliance: what “defensible” looks like

If you operate in the EU, you already know the direction of travel: more expectation of risk management, documentation, and oversight for AI systems (see the EU AI Act on EUR-Lex). Regardless of whether a specific use case is “high-risk,” the compliance function should behave as if it may have to explain its tooling choices.

In practice, defensible AI use in compliance usually includes:

- Data access control (least privilege) and segregation of duties

- Clear rules on what data can be processed and where it is stored

- Source traceability for any output used in policies, investigations, or audits

- Human review checkpoints for decisions, scoring, and external communications

- Audit logs for model use, exports, and key workflow events

- Retention rules aligned to your legal holds and record management

If you only have “prompt history,” you are missing the governance layer.
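As an illustration of what a governance layer adds beyond prompt history, the Python sketch below gates the release of an AI-generated output on three of the items above: source traceability, a human review checkpoint, and segregation of duties. The `AiOutput` structure and its field names are assumptions made for the example, not a prescribed format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AiOutput:
    output_id: str
    generated_by: str          # user or agent that triggered the generation
    sources: tuple[str, ...]   # hypothetical "document@version" references
    reviewed_by: Optional[str] = None


def release_blockers(output: AiOutput) -> list[str]:
    """List the reasons an AI output should not yet be used as compliance evidence."""
    blockers = []
    if not output.sources:
        blockers.append("no source traceability")
    if output.reviewed_by is None:
        blockers.append("no human review")
    elif output.reviewed_by == output.generated_by:
        blockers.append("reviewer is the same person who generated the output")
    return blockers


# Illustrative cases; names and references are made up.
draft = AiOutput("OUT-1", generated_by="analyst.a", sources=())
self_reviewed = AiOutput(
    "OUT-2", generated_by="analyst.a",
    sources=("gifts-policy@v4",), reviewed_by="analyst.a",
)
ready = AiOutput(
    "OUT-3", generated_by="analyst.a",
    sources=("gifts-policy@v4",), reviewed_by="compliance.lead",
)

draft_blockers = release_blockers(draft)
self_review_blockers = release_blockers(self_reviewed)
ready_blockers = release_blockers(ready)
```

Access control, retention, and audit logging would sit around a check like this; the sketch only shows that "defensible" can be expressed as conditions a system enforces rather than habits a team remembers.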

How Naltilia can help

If you are ready to move from “LLM answers” to “audit-ready execution,” Naltilia focuses on AI embedded in compliance workflows: risk assessment, remediation actions, tailor-made policies, automated data collection, effectiveness tests and workflow automation. The goal is not to generate more text; it is to connect risks, controls, owners, and evidence so you can replay decisions and prove effectiveness.

If this is the direction you want to take, you can explore Naltilia at naltilia.com.

Closing thought: the future is executable

General-purpose LLMs are a leap forward for knowledge work, and they will remain useful assistants for compliance teams.

But compliance maturity is measured by what you can prove, consistently, over time. The teams that win will not be the ones with the best prompts. They will be the ones who operationalise governance, turn “management risk analysis” into a living system, and can replay the logic behind every key control under pressure.

This article is general information, not legal advice.