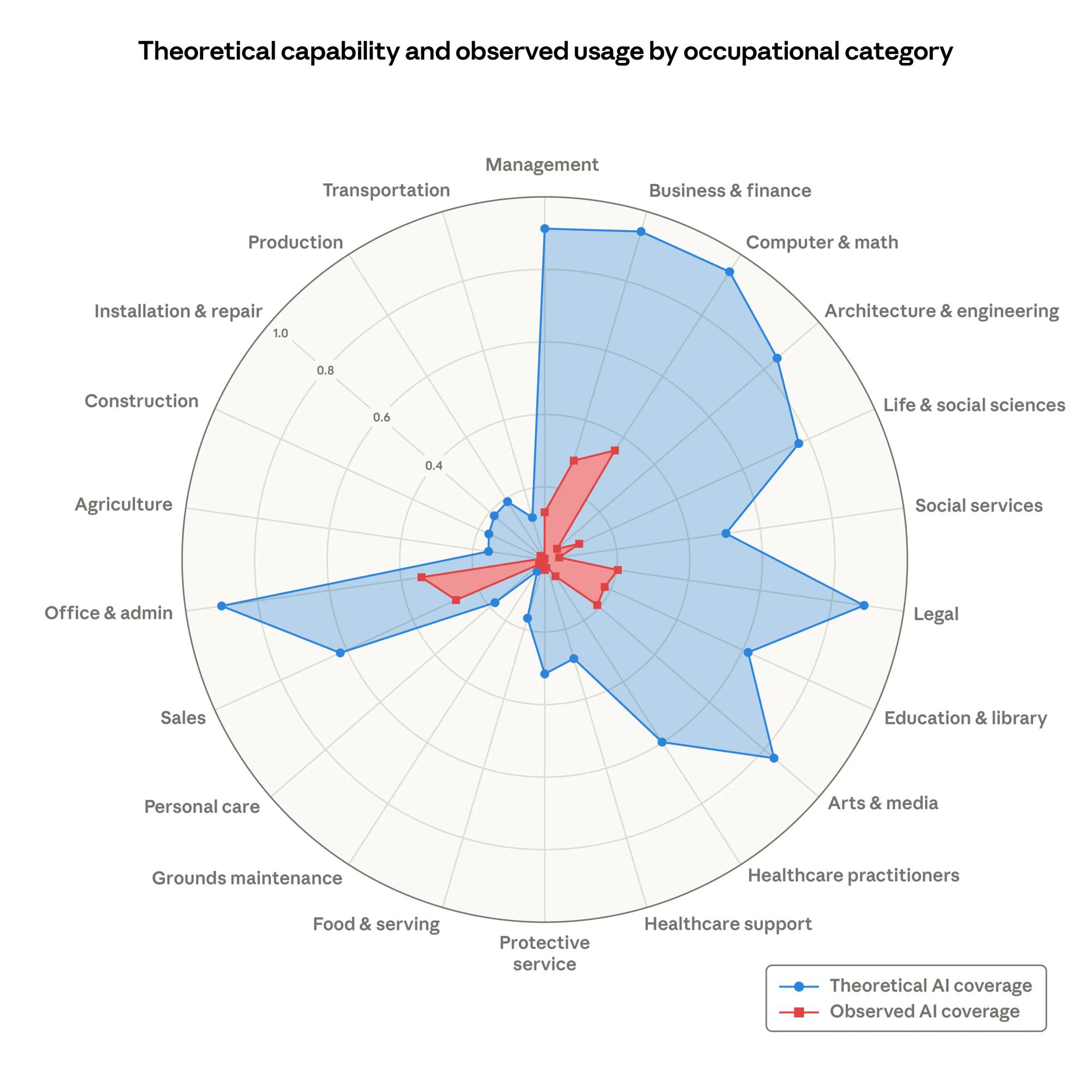

Anthropic’s new research on AI and jobs is a useful reality check for compliance leaders, not because it predicts mass layoffs, but because it measures something most debates ignore: the gap between what AI could do and what people actually use it for.

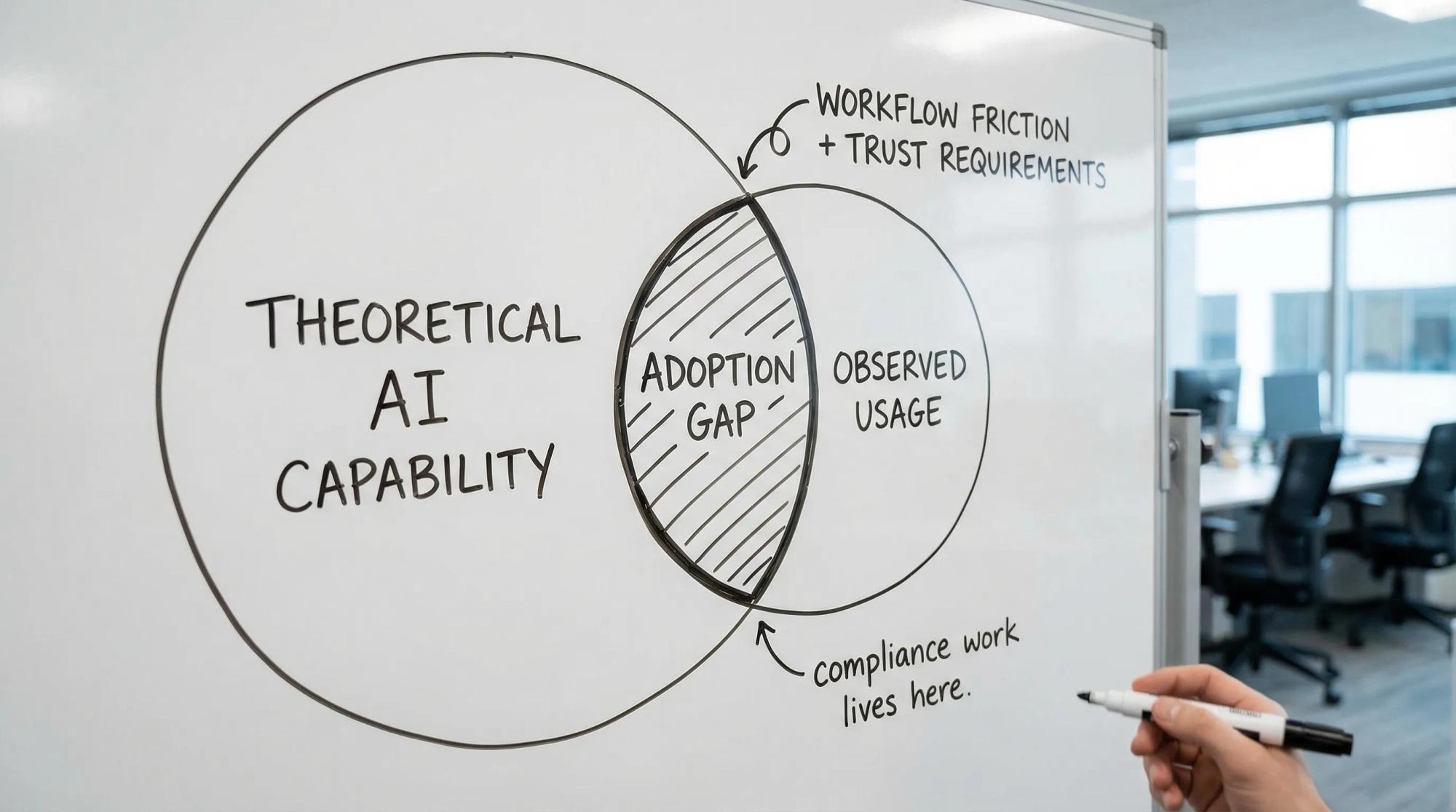

In “Labor market impacts of AI: a new measure and early evidence” (published March 5, 2026), Anthropic introduces “observed exposure,” a measure that combines theoretical LLM capability with real usage patterns (and weights automated, work-related usage more heavily). The core finding is simple: in real workflows, AI use falls far short of what the technology could theoretically do. That “uncovered area” is where compliance work lives.

Source: Anthropic research report

What the Anthropic report changes for legal and compliance teams

A lot of commentary treats AI adoption as a matter of individual motivation (curious professionals adopt, conservative ones resist). The report points in a different direction.

It shows a persistent, measurable distance between:

🔵 what AI could already do in a job

🔴 what people are actually using it for

And in Legal, the gap is massive.

For compliance teams, the takeaway is not “LLMs are weak.” It is: general-purpose chat interfaces do not match systematic, evidentiary work.

2 structural blind spots that keep AI adoption low in compliance

The adoption gap in legal and compliance is often misdiagnosed as cultural. In practice, two structural blind spots explain most of it.

Blind spot 1: teams buy chatbots, but compliance runs on systems

“Ask a question, get an answer” is only a thin slice of compliance.

A defensible compliance program under frameworks such as France’s loi Sapin II (and AFA expectations), ISO 37001, UNE 19601, or UNE 19603 is built on artifacts you can revisit years later:

- risk mapping by activity, geography, and third parties

- translation of non-compliance risks into operational controls

- evidence collection, verification, and retention

- traceability (who did what, when, based on what)

- repeatable testing of control effectiveness, not just control design

Most of the workflows compliance teams rely on today were designed long before AI was part of the picture.

A chat interface can draft a policy clause. It cannot, on its own, maintain a risk-control-evidence chain.

If the tool cannot connect AI output to your compliance operating model, adoption stalls because using it creates extra work (copy-paste, reformatting, revalidating).

Blind spot 2: in regulated environments, trust is a design requirement

In many roles, a wrong answer is a productivity issue.

In compliance, a wrong answer can become:

- a failed audit (internal, external, certification)

- a regulatory challenge on program effectiveness

- reputational damage

- executive exposure

So the real question becomes: could you defend the AI-assisted decision to a regulator or auditor in three years?

That pushes you toward AI that is:

- traceable in sources (or clearly limited when it cannot cite)

- auditable in outputs (versioning, retention, approvals)

- governed (who can use it, for what, with what checks)

- embedded in your processes, not parallel to them

7 blind spots blocking AI in compliance, and how to build audit-ready evidence

These are the adoption blockers that show up in real programs (France and Spain included). Each comes with a concrete fix you can implement without changing your whole stack.

1) Using AI for “answers” instead of for evidence-heavy workflows

Symptom: the team tests AI on legal interpretation questions, gets nervous about hallucinations, and stops.

Fix: start where AI reduces friction while keeping humans in charge.

Best first workflows are typically:

- obligation extraction into a structured register (with human validation)

- drafting first versions of policies, procedures, control narratives

- converting scattered evidence into indexed, searchable records

- preparing audit packs (assembling what already exists, highlighting gaps)

The win is not “AI decided.” The win is “AI reduced the time to assemble and verify.”

2) No separation between control design and control effectiveness testing

Symptom: AI drafts controls and the organization treats that as “done.”

Fix: keep a strict split:

- design: what the control is supposed to do (policy, workflow, threshold, approval)

- testing: how you prove it worked (sampling rules, evidence criteria, exceptions, remediation)

AI can help with both, but your governance must label which is which, and store evidence accordingly.
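As a minimal sketch of that labeling rule, the snippet below tags every record as either design or testing and only treats a control as done when both exist. The class names, field names, and the `CTRL-001` example are hypothetical illustrations, not part of any framework or product mentioned in this article.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class RecordKind(Enum):
    DESIGN = "design"    # what the control is supposed to do
    TESTING = "testing"  # how you prove it worked


@dataclass(frozen=True)
class ControlRecord:
    control_id: str      # hypothetical identifier scheme
    kind: RecordKind
    summary: str
    ai_assisted: bool    # flag AI involvement on the record itself
    recorded_on: date


def is_done(control_id: str, records: list[ControlRecord]) -> bool:
    """A control counts as done only with a design AND a testing record."""
    kinds = {r.kind for r in records if r.control_id == control_id}
    return {RecordKind.DESIGN, RecordKind.TESTING} <= kinds


records = [
    ControlRecord("CTRL-001", RecordKind.DESIGN,
                  "Gifts above threshold need manager approval",
                  ai_assisted=True, recorded_on=date(2026, 3, 10)),
]
print(is_done("CTRL-001", records))  # False: an AI-drafted design is not proof

records.append(
    ControlRecord("CTRL-001", RecordKind.TESTING,
                  "Sampled gift register entries, exceptions remediated",
                  ai_assisted=False, recorded_on=date(2026, 4, 2)))
print(is_done("CTRL-001", records))  # True: design and testing both recorded
```

The point of the design choice is that a drafted control cannot silently count as an effective one: the "done" check fails until testing evidence is stored under its own label.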

3) No “minimum defensibility standard” for AI-assisted work

Symptom: AI is used inconsistently, sometimes with no record of prompts, inputs, or reviewer.

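Fix: define a minimum evidence record for any AI-assisted compliance artifact: the inputs, the AI operation (prompt or tool), the output, the human reviewer, the approval, the version, and the retention location.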

If you cannot capture this lightly, AI will remain “off the record,” and leadership will (rationally) resist scaling it.
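One lightweight way to capture such a record is a small structured object with a completeness check. The sketch below uses the elements named in the FAQ at the end of this article (inputs, AI operation, output, human review, approval, versioning, retention location); the class, the field names, and the example values are assumptions for illustration only.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class AIEvidenceRecord:
    """Hypothetical minimum record for one AI-assisted artifact."""
    artifact_id: str
    inputs: str                               # source documents or data used
    ai_operation: str                         # prompt or tool that was run
    output_ref: str                           # where the AI output is stored
    reviewer: Optional[str] = None            # human who checked the output
    approver: Optional[str] = None            # person who signed off
    version: Optional[str] = None
    retention_location: Optional[str] = None


def missing_fields(record: AIEvidenceRecord) -> list[str]:
    """Return the names of fields that are still empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


record = AIEvidenceRecord(
    artifact_id="POL-GIFTS-DRAFT",
    inputs="Gifts policy v3, group code of conduct",
    ai_operation="Prompt: draft a gifts and hospitality clause",
    output_ref="drafts/pol-gifts-v4.docx",
    reviewer="compliance.officer@example.com",
    version="v4-draft",
)
print(missing_fields(record))  # ['approver', 'retention_location']
```

An artifact with an empty list is "on the record"; anything else shows exactly what is still needed before it can be relied on.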

4) Risk mapping remains a static spreadsheet, so AI has nothing stable to anchor to

Symptom: you run a risk assessment workshop, update a heatmap once a year, and the rest of the year everyone works from memory.

Fix: convert the risk map into a living object built from three stable, linked elements:

- a risk ID and scenario definition

- linked controls (preventive/detective, owner, cadence)

- linked tests and evidence

This is also where cross-border complexity is easiest to manage: you can align a core scenario library, then add France- and Spain-specific overlays (Sapin II specifics, UNE 19601 offence categories, UNE 19603 antitrust scenarios) without duplicating everything.
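The core-plus-overlay idea can be sketched as a shared scenario library that country overlays extend rather than copy. Everything named here (the `Risk` class, the `R-001`, `C-010`, and `C-FR-01` identifiers, the scenario wording) is a hypothetical placeholder, not content drawn from Sapin II or the UNE standards.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Risk:
    risk_id: str                        # stable identifier
    scenario: str                       # scenario definition
    control_ids: tuple[str, ...] = ()   # linked controls
    test_ids: tuple[str, ...] = ()      # linked tests and evidence


def apply_overlay(core: dict[str, Risk],
                  overlay: dict[str, dict]) -> dict[str, Risk]:
    """Extend core risks with country-specific controls and tests.

    The core library is left untouched; an overlay that refers to an
    unknown risk ID raises KeyError instead of creating a stray entry.
    """
    merged = dict(core)
    for risk_id, extra in overlay.items():
        base = merged[risk_id]
        merged[risk_id] = replace(
            base,
            control_ids=base.control_ids + tuple(extra.get("control_ids", ())),
            test_ids=base.test_ids + tuple(extra.get("test_ids", ())),
        )
    return merged


core = {
    "R-001": Risk("R-001", "Improper payment through an intermediary",
                  control_ids=("C-010",), test_ids=("T-010",)),
}
france_overlay = {"R-001": {"control_ids": ["C-FR-01"]}}

france = apply_overlay(core, france_overlay)
print(france["R-001"].control_ids)  # ('C-010', 'C-FR-01')
print(core["R-001"].control_ids)    # ('C-010',)
```

Because the risk ID stays the same across jurisdictions, tests and evidence can be attached once and reported per country without duplicating the scenario.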

5) Third-party due diligence is treated as intake paperwork, not an owned operating process

Symptom: due diligence happens at onboarding, then quietly decays. Evidence is scattered across procurement, legal, and finance.

Fix: run third-party oversight as a lifecycle with operational ownership:

- onboarding risk tier and required controls

- contract clauses mapped to controls (not just “legal terms”)

- periodic re-review triggers

- remediation tracking for third parties (not just internal actions)

A concrete example: if marketing wants to use geo-distributed social publishing services such as TokPortal for posting TikToks in target countries, treat it like any other third party. Define what you need to evidence (ownership, access control, data handling, brand/account governance), who approves, and how you re-check it.
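Periodic re-review triggers are easier to own when they are written down as explicit rules. The following is a rough sketch only: the tier names, the review intervals, and the trigger conditions are assumed values chosen for illustration and would need to be set by your own program.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed cadences per risk tier, in days. Not taken from any framework.
REVIEW_INTERVAL_DAYS = {"high": 365, "medium": 730, "low": 1095}


@dataclass
class ThirdParty:
    name: str
    tier: str                       # "high", "medium", or "low"
    last_review: date
    open_remediations: int = 0      # remediation actions owed by the vendor
    ownership_changed: bool = False


def review_reasons(tp: ThirdParty, today: date) -> list[str]:
    """Return the reasons, if any, why this third party needs a re-review."""
    reasons = []
    interval = timedelta(days=REVIEW_INTERVAL_DAYS[tp.tier])
    if today - tp.last_review > interval:
        reasons.append("periodic review overdue")
    if tp.ownership_changed:
        reasons.append("ownership change")
    if tp.open_remediations:
        reasons.append("open remediation actions")
    return reasons


vendor = ThirdParty("Example social publishing vendor", tier="high",
                    last_review=date(2025, 1, 15), open_remediations=1)
print(review_reasons(vendor, today=date(2026, 3, 1)))
# ['periodic review overdue', 'open remediation actions']
```

An empty list means no action is due; a non-empty list doubles as the evidence of why a re-review was opened.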

6) Training fatigue is solved with “more content” instead of measurable behavior

Symptom: training completion rates exist, but you cannot show whether behavior changed.

Fix: define 2 to 3 outcome signals per training topic (and capture them as evidence). Examples:

- gifts and hospitality: approval rate vs. threshold breaches, register completeness

- antitrust: pre-clearance usage for trade association meetings, minutes checks

- speak-up: time to triage, substantiation rate, feedback timeliness

AI can help draft micro-scenarios, localize content, and generate manager discussion guides, but your program must measure outcomes or auditors will see “activity, not effectiveness.”

7) Reporting to leadership stays narrative-only, so resourcing stays political

Symptom: the board hears “we updated policies,” not “risk decreased and here is the proof.”

Fix: adopt a small set of evidence-backed KPIs that connect directly to program effectiveness. Even before dashboards, define consistent rules for:

- control testing coverage (what percent of key controls were actually tested)

- exception rate and remediation velocity

- third-party coverage by tier

- audit evidence retrieval time (a simple but revealing metric)

Anthropic’s “observed exposure” idea is useful here: you can measure your own “observed automation” in compliance. Not to cut headcount, but to show increased capacity and reduced operational drag.

This is how you avoid the most common trap: scaling AI because it demos well, then discovering it cannot be defended.

Frequently asked questions

Why is AI adoption slower in legal and compliance than in other knowledge work?

Because compliance is evidentiary and must be defensible over time. Tools that only provide conversational answers do not produce the traceability, workflow, approvals, and retention required for audits and regulators.

What is the safest place to start with AI in a Sapin II or ISO 37001 program?

Start with friction removal where humans still decide: evidence collection, obligation extraction into registers, first drafts of policies and control narratives, and audit pack assembly with clear reviewer sign-off.

How do we make AI outputs auditable?

Treat AI-assisted artifacts like any other controlled document: capture inputs, the AI operation (prompt/tool), outputs, human review, approval, versioning, and retention location. If you cannot reproduce or explain it, do not scale it.

How Naltilia can help

Naltilia is designed for the “adoption gap” highlighted by Anthropic: not AI as a standalone chat, but AI embedded into compliance workflows. It helps teams operationalize regulatory risk assessment, translate risks into owned remediation actions, automate data and evidence collection, and run workflow automation with traceability. The goal is to reduce paperwork friction while improving audit readiness across programs aligned to loi Sapin II, ISO 37001, and UNE 19601/19603.

Contact Naltilia to discuss your workflow and evidence priorities: https://calendly.com/iratxe-naltilia/30min

This article is general information, not legal advice.